AI is useful for data processing and task automation.

However, it has many risks that need to be mitigated or avoided.

Risks

The risks for data processing come from the lack of interpretability of AI output: without it, users cannot tell when to trust an output and when not to use AI at all. The risks for automation come from “hallucinations,” which cause AI to fail in unforeseen or untrained circumstances.

Together, these types of risks result in increased costs to customers that need to be minimized.

Costs

Energy: model training and usage

Training costs are usually covered by big tech companies like Microsoft or by the AI companies themselves. However, they are starting to realize that this is not profitable long-term, so they are increasing prices for customers. Eventually this will mean that fewer customers will pay for AI from American companies because Chinese AI is cheaper. The environmental impacts of the energy used for model training and usage are not yet relevant to costs because the US government does not regulate or charge for increased pollution. If we get an administration that does, then this will become relevant, too, and companies will have the same cost-saving incentives. Eventually both will become more expensive, so 1. customers will need to weigh the value of AI vs its training and usage costs.
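To make recommendation 1 concrete, here is a minimal sketch of how a customer might weigh value against cost for a single use case. All of the names and figures (`AIUsageEstimate`, `hours_saved`, `review_hours`, and so on) are hypothetical placeholders, not measurements; the point is only that the time spent checking AI output belongs on the cost side.

```python
from dataclasses import dataclass


@dataclass
class AIUsageEstimate:
    """Hypothetical monthly figures a customer might collect for one AI use case."""
    hours_saved: float        # staff hours the AI saves per month
    hourly_rate: float        # cost of one staff hour
    subscription_cost: float  # monthly price paid to the AI vendor
    review_hours: float       # staff hours spent checking and fixing AI output


def net_monthly_value(e: AIUsageEstimate) -> float:
    """Value of the time saved minus the cost to buy and supervise the AI."""
    value = e.hours_saved * e.hourly_rate
    cost = e.subscription_cost + e.review_hours * e.hourly_rate
    return value - cost


def break_even_price(e: AIUsageEstimate) -> float:
    """Highest monthly price at which the AI still pays for itself."""
    return (e.hours_saved - e.review_hours) * e.hourly_rate


# Illustrative numbers only (assumed, not real data).
estimate = AIUsageEstimate(
    hours_saved=40, hourly_rate=50, subscription_cost=500, review_hours=10
)
print(net_monthly_value(estimate))
print(break_even_price(estimate))
```

If vendors raise prices past the break-even figure, or if review time grows because outputs cannot be trusted, the net value turns negative and the use case is no longer worth paying for.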

Labor: marketing, development, data labeling, content moderation

AI companies are investing heavily in marketing their products, but with misleading promises that are not technically illegal. This can be improved by 2. communicating the value of the AI for known customer uses.

Software developers are being laid off because managers think AI can do their jobs, but it can’t: vibe coding requires both very specific prompts (i.e., prompt engineering expertise) and trained developers to fix its mistakes. Therefore, companies still need the services of developers, but maybe not full-time over a long period. Whether developers are contracted when needed or hired for longer terms, a product can be developed when its developers can effectively use AI to increase their productivity, which means 3. designing AI to effectively collaborate with the developers.

Data labeling for supervised training of AI models and moderation of automatically generated content tend to be outsourced to countries with weak labor protections, resulting in the exploitation of workers, who are overworked and not provided with mental health coverage for the trauma caused by harmful content. Supervised training can be reduced by moving away from foundational deep neural network models when possible: much of large language model (LLM) training is to enable the model to communicate with users via text, so 4. creating better user interfaces for AI systems would avoid this risk.

Content moderators can be better supported by managing what content is automatically generated in the first place: rather than maximizing common metrics like user engagement, more specific business metrics could be targeted with 5. greater interpretability and control of the AI.

Legal: misuse with deep fakes, plagiarism

AI companies could get sued for the impact that misuse of their products has on customers’ businesses, but presumably they avoid this with tricky contract language. Therefore, the main impact on their business is fewer customers and thus reduced revenue. However, AI companies are funded by venture capital investments, so they don’t need to make a profit for now. Still, long-term, it would be wise to reduce misuse of their AI products, meaning 6. the AI products should be designed with more specific uses in mind.

Customer support: difficulty of use, incorrect outputs

The difficulty of use has forced users to become “prompt engineers,” meaning they must know which words or phrases get the best output from AI. Relatedly, AI is sometimes incorrect in its outputs (i.e., it “hallucinates”) because it only outputs combinations of what it was trained on. Because the outputs often do not come with a rationale or other context, users who rely on them will make professional mistakes. In both cases, customers will need support from the AI companies to figure out how to best use their products. Therefore, to reduce the need for customer support, 7. AI products should be designed to collaborate with users so they know when to trust it or not.

Other Risks: Power Imbalances

The biggest risk that I am interested in is power imbalances. Those who have the money and connections to create and run AI companies, or to purchase products from those companies, do so presumably because it serves their primary interest: increasing profits. Even worse, many are immune from government regulations and ignore ethical standards, so they will increase profits at all costs. A big part of this is hiding information from their workers and from society more generally, keeping the rest of us disempowered to do anything about it. Therefore, 8. AI systems should be designed to be transparent to end users as well as to their makers and customers.

Unified Workflow

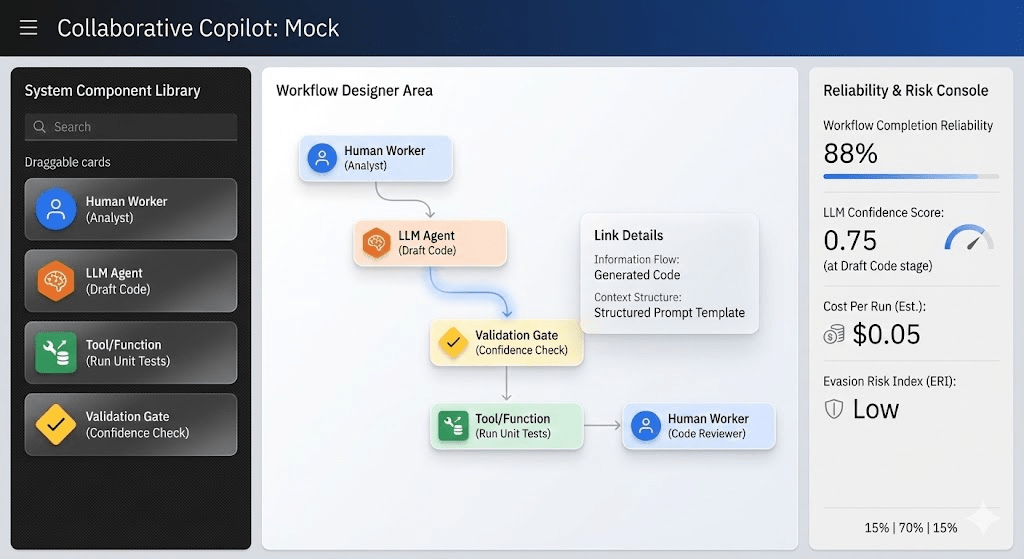

I am looking to combine AI’s benefits and risks into a unified workflow. Ideas to mitigate the above risks include showing its value [1,2] for data processing/automation, showing its costs [1] vs its value, showing its intended uses [2,6], and improving the user interfaces to be more collaborative (e.g., with output rationale) [3,4,5,7,8].

Taking these ideas together, I intend to build a unified human-AI workflow design tool (the “Collaborative Copilot”). It will start with humans in the loop because AI hallucinations make it untrustworthy, but as tasks become better defined and domain risks are better understood, more automation becomes possible.
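As a rough illustration of that progression, the sketch below routes a task to a human-in-the-loop mode by default and only allows automation once the task is well defined, its domain risks are understood, and the AI has a low observed correction rate. Everything here is an assumption made for illustration: the `Task` fields, the `choose_mode` function, and the 2% threshold are hypothetical, not a specification of the Collaborative Copilot.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    HUMAN_IN_THE_LOOP = "AI drafts, a human reviews every output"
    AUTOMATED = "AI acts on its own, a human audits samples"


@dataclass
class Task:
    name: str
    well_defined: bool     # inputs, outputs, and success criteria are written down
    risk_understood: bool  # the domain cost of a wrong output has been assessed
    error_rate: float      # observed share of AI outputs a human had to correct


def choose_mode(task: Task, max_error_rate: float = 0.02) -> Mode:
    """Default to human review; automate only when every condition is met.

    The 0.02 threshold is an arbitrary placeholder, not a recommendation.
    """
    if (
        task.well_defined
        and task.risk_understood
        and task.error_rate <= max_error_rate
    ):
        return Mode.AUTOMATED
    return Mode.HUMAN_IN_THE_LOOP


# Hypothetical tasks at different stages of maturity.
new_task = Task("summarize contracts", well_defined=False,
                risk_understood=False, error_rate=0.15)
mature_task = Task("tag support tickets", well_defined=True,
                   risk_understood=True, error_rate=0.01)

for task in (new_task, mature_task):
    print(f"{task.name}: {choose_mode(task).name}")
```

The design choice is that human review is the fallback rather than the exception: a task has to earn automation, and it drops back to review as soon as any condition stops holding.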